Five Things: Dec 27, 2025

Congressional hearing, RAISE Act, biology by GPT-5, METR graph, goodbye 2025!

[NOTE: this newsletter covers events from the last two weeks of 2025; the next newsletter will do the same on or about Jan 10th, 2026. Happy holidays/new year!]

Five things that happened/were publicized in the past two weeks in the worlds of biosecurity and technology:

Congressional subcommittee hearing on AI and biosecurity

New York adopts the RAISE Act for AI safety

GPT-5 makes a novel improvement to a standard biology experimental technique

METR time horizons evaluation of Claude Opus 4.5

A few end-of-year takes

1) AI x Biosecurity at the People’s House

In Washington, DC, the Oversight and Investigations Subcommittee (of the House Energy and Commerce Committee) held a hearing titled Examining Biosecurity at the Intersection of AI and Biology (there is also a separate site with more info by the Committee Democrats, here). You can watch the whole thing on YouTube; as usual, the questions vary widely in quality and informativeness.

The written testimonies submitted to the committee by the expert witnesses, linked on the Committee Democrats’ site, contain a lot of good information. All of the witnesses were real experts with smart things to say.

2) First we take Manhattan (well, second, after California)

NY Governor Kathy Hochul signed the RAISE Act into law. By all accounts, it is very similar to California’s SB-53, and it was championed by the tech giants’ new favorite punching bag, Assemblymember Alex Bores, who is running for the US House next year. To quote the politician:

We defeated last-ditch attempts from an extreme AI super PAC and the AI industry to wipe out this bill and, by doing so, raised the floor for what AI safety legislation can look like. And we defeated Trump’s—and his megadonors—attempt to stop the RAISE Act through executive action.

3) GPT-5 doing (more) biology for real

OpenAI announces that GPT-5 is capable of accelerating actual wet-lab biological research: it suggested protocol modifications that increased cloning and transformation efficiency by ~70 times!

Now, that sounds like a lot; if my bank account increased 70x, I think my lifestyle would change drastically. In context, though, it is not quite as impressive as it seems: it just means that the number of successful bacterial colonies went from, let’s say, a thousand to 70,000. That is great, but order-of-magnitude differences are not so uncommon in routine lab work.
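For scale, here is the arithmetic using the illustrative colony counts above (these are the hypothetical round numbers from this paragraph, not figures from OpenAI’s announcement):

```latex
\text{fold change} = \frac{70{,}000}{1{,}000} = 70,
\qquad
\log_{10} 70 \approx 1.85 \ \text{orders of magnitude}
```

So a ~70x gain is a bit under two orders of magnitude, the kind of difference the paragraph above describes as not unusual in routine lab work.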

The really wild parts of this announcement, to my mind, are that GPT-5 came up with a fairly clever way of achieving this improvement by applying “known” molecular biology facts in a creative way, and that all of the experiments could be carried out by robots controlled by the AI model. A video is available at the OpenAI announcement link.

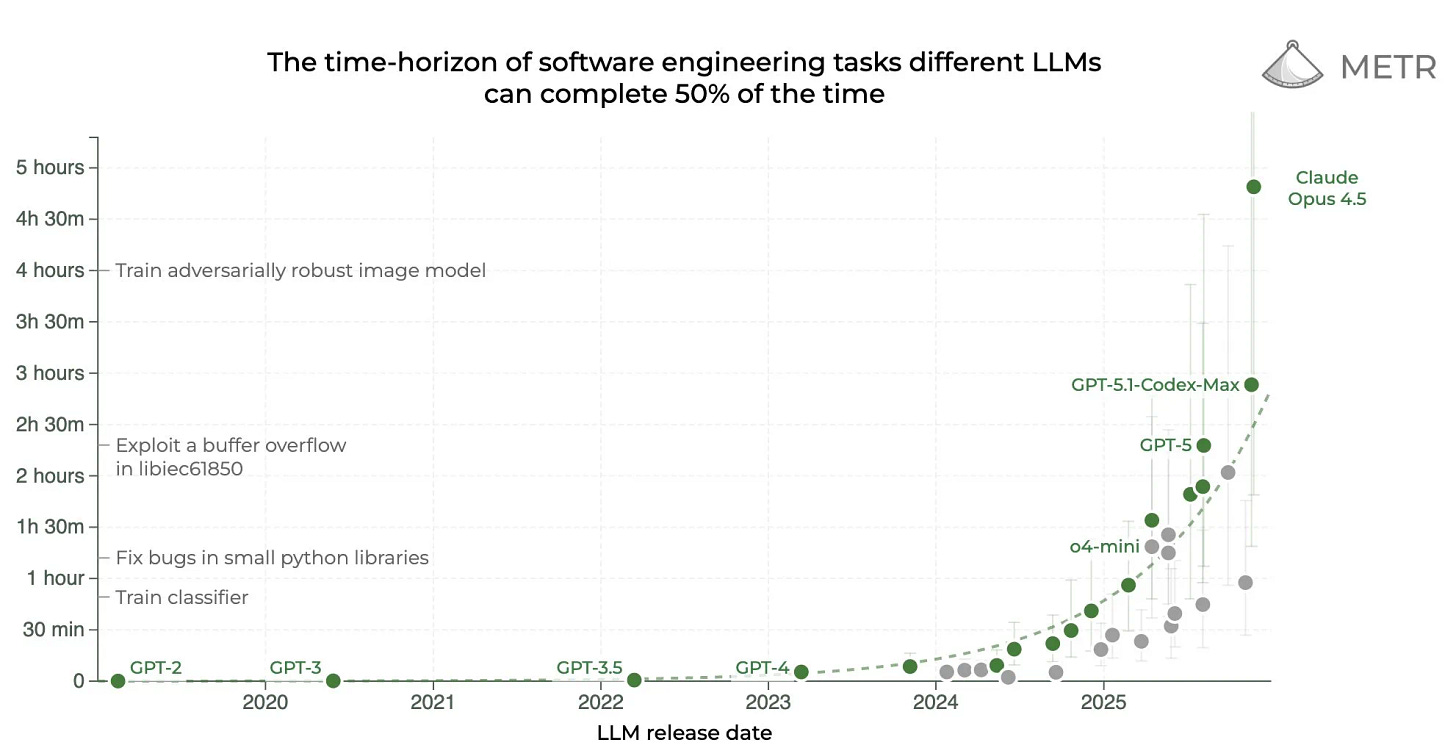

4) Opus 4.5 blows up the METR graph

The METR (Model Evaluation & Threat Research) time horizons evaluation is one of the most important measurements of how good AI is really getting at software engineering. It measures how long a task (in terms of how long it takes a skilled human) an AI system can complete on its own, without human help, with 50% reliability. So you can think of a five-second task as something like autocomplete finishing your sentence, and a day-long task as… replacing your full-time software developer employees? Something like that. This is a much more quantitative and robust way of asking “how well can it code?”
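To make the “time horizon” idea concrete, here is a minimal Python sketch loosely modeled on the approach METR has described: fit a logistic curve of success probability against the log of each task’s human completion time, then read off the task length at which predicted success crosses 50%. The function name `fit_time_horizon`, the plain gradient-ascent fitting, and the task outcomes are all hypothetical, chosen for illustration; this is not METR’s code or data.

```python
import math

def fit_time_horizon(minutes, successes, lr=0.5, steps=5000):
    """Hypothetical helper: estimate a 50% time horizon (in minutes).

    Fits P(success) = sigmoid(a * x + b), where x is the centered log2 of
    each task's human completion time, by gradient ascent on the mean
    log-likelihood, then returns the task length where P(success) = 0.5.
    """
    xs = [math.log2(m) for m in minutes]
    mean_x = sum(xs) / len(xs)
    xc = [x - mean_x for x in xs]  # centering keeps the fit well-conditioned
    n = len(xc)
    a, b = 0.0, 0.0
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for x, y in zip(xc, successes):
            p = 1.0 / (1.0 + math.exp(-(a * x + b)))
            grad_a += (y - p) * x
            grad_b += (y - p)
        a += lr * grad_a / n
        b += lr * grad_b / n
    if a >= 0:
        raise ValueError("success does not decline with task length; no horizon")
    # P = 0.5 where a * x + b = 0, i.e. centered x = -b / a; undo the centering.
    return 2 ** (mean_x - b / a)

# Made-up outcomes for illustration only (1 = success), not METR data.
minutes = [1, 2, 4, 8, 16, 32, 32, 64, 128, 256, 512, 1024]
successes = [1, 1, 1, 0, 1, 1, 0, 0, 1, 0, 0, 0]
h = fit_time_horizon(minutes, successes)
print(f"50% time horizon: about {h:.1f} minutes")  # prints about 32.0 minutes
```

The made-up outcomes are mirror-symmetric around 32 minutes on the log scale, which is why the fitted horizon lands there. If the outcomes were perfectly separable (every short task passes, every long one fails), the fitted slope would keep growing with more steps, so a sketch like this needs some overlap between successes and failures to give a stable answer.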

The METR graph shows exponential growth; there is a lot of discussion about the precise nature of this exponential, but the big picture is obvious. METR’s general view is that AI models that perform well on 5-hour-horizon tasks can basically act as junior developers, modifying large systems and solving sophisticated infrastructure problems, and potentially causing serious damage if “misaligned,” especially if they go off and do these tasks independently for a few hours without human oversight.
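One way to read an exponential like this is as a constant doubling time. The sketch below shows what that implies if the trend simply continues. The 5-hour starting point echoes the text; the seven-month doubling time is an assumption (roughly the figure METR reported in early 2025), and both functions are hypothetical helpers, not anything METR publishes.

```python
import math

def months_until(target_hours, current_hours, doubling_months):
    """Hypothetical helper: months for the horizon to grow from current_hours
    to target_hours, ASSUMING it keeps doubling every doubling_months."""
    doublings = math.log2(target_hours / current_hours)
    return doubling_months * doublings

def horizon_after(months, current_hours, doubling_months):
    """Hypothetical helper: projected horizon (hours) after `months`,
    under the same constant-doubling assumption."""
    return current_hours * 2 ** (months / doubling_months)

# Assumptions for illustration: ~5-hour horizon now, doubling every 7 months.
current, doubling = 5.0, 7.0
print(f"8-hour workday:   {months_until(8, current, doubling):.1f} months")
print(f"40-hour workweek: {months_until(40, current, doubling):.1f} months")
print(f"after 12 months:  {horizon_after(12, current, doubling):.1f} hours")
```

Going from 5 to 40 hours is exactly three doublings, so under these assumptions the workweek case comes out to 21 months. Whether the curve actually keeps that shape is exactly the open question about the “precise nature of this exponential.”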

So here we are, with a good way to wrap up 2025: Opus 4.5 is basically at that point. Big picture, it’s hard to know exactly what that means, other than that METR now has to come up with some new tasks if it wants to keep testing LLMs in 2026.

5) Goodbye 2025!

Lots of takes, some good and some bad, have summed up this past year from the perspective of AI safety. As a “Five Things” newsletter, I feel obligated to have my own list of the top five things that happened this year in the world of AI and biosecurity, which will be posted here when appropriate (after all, you never know what crazy thing will happen on Dec 31st).

Many others do the same: Andrej Karpathy has this excellent ‘year in review’, for example. Dwarkesh Patel offers his thoughts, mainly on the challenges of AI scaling; he seems to have been strongly influenced by the Richard Sutton interview (but I am hoping he will interview Fei-Fei Li at some point; I think she has ideas in roughly the same direction, but with some important differences). I also liked Eric Schmidt’s recent essay on the “Consensus” (or really, lack thereof) on the state of AI, even though it wasn’t phrased as a “2025 retrospective”.

As usual, I like the framing by Transformer, which has emerged as an excellent, serious, and readable (sorry Zvi) online magazine that still has a lighter side, as exemplified by “The Worst (and Funniest) AI Takes of 2025” and this lovely gift-giving guide for the AI-safety-pilled person in your life. Sure, I’ll take a sterling silver paperclip from Tiffany’s!

In Other News…

On AI doing things in the world:

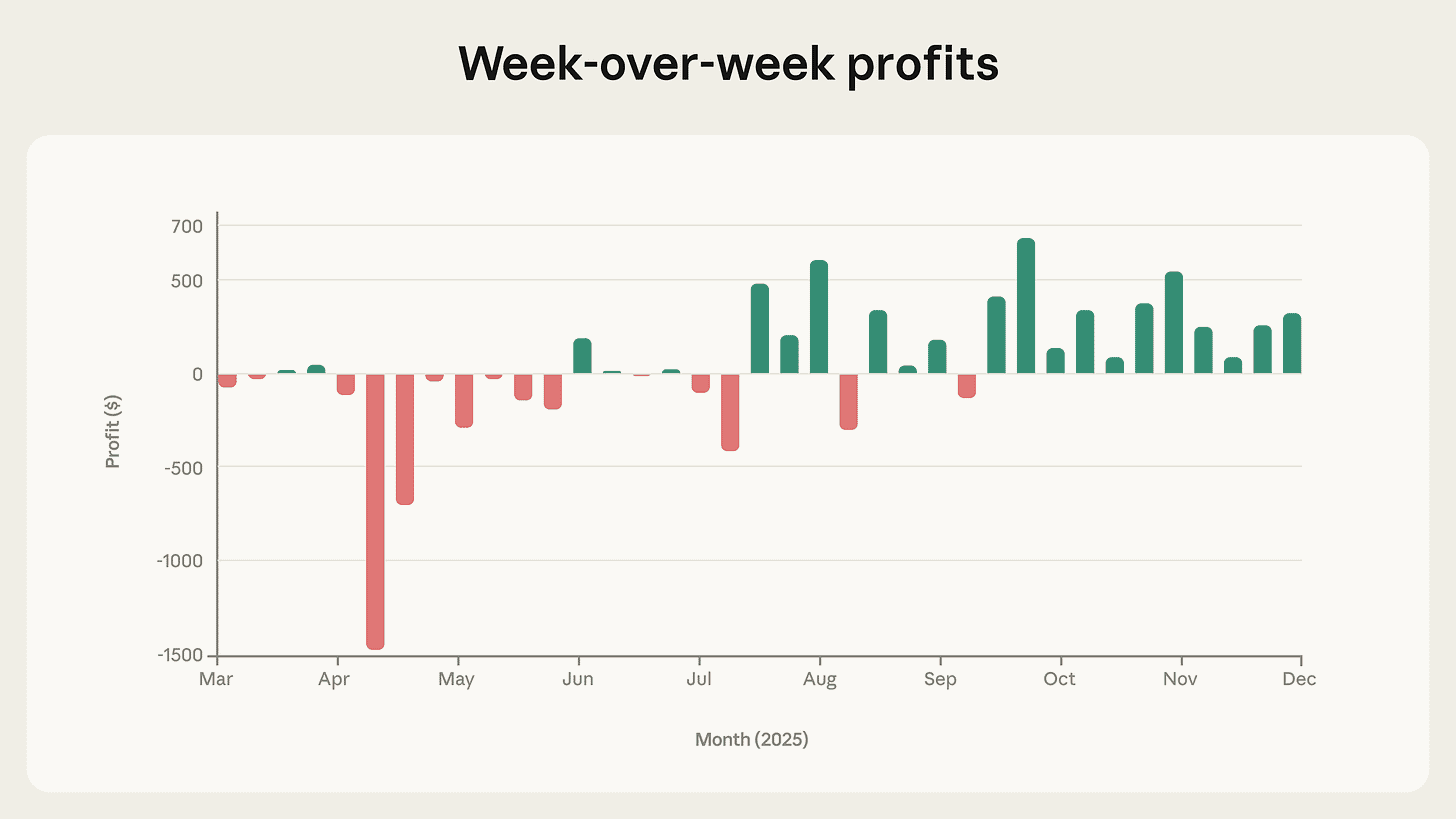

A few months ago, Anthropic announced that they had let Claude operate a vending machine to try and make some money, but it failed spectacularly. The latest update on “Project Vend” is… that it is now turning steady profits! Way to go!

Their article about how they accomplished this is legitimately hilarious; to take one random example, one of the improvements came from giving the main agent, whom they named Claudius, a CEO whom they named “Seymore Cash.”

In an official Google-sponsored podcast video, Google DeepMind co-founder Shane Legg says current models are showing “more than sparks” of Artificial General Intelligence (AGI). He says we are on our way to creating machines that will far surpass human intelligence, and discusses what that might mean. I liked his framework for analyzing System 1 vs. System 2 thinking in AI models, with chain-of-thought monitoring applying to System 2 and hopefully reinforcing that mode of thinking.

“A Barrister’s Warning” at the Spectator: an English barrister finds that an AI model can do his job better than he can, in less than one tenth of the time and cost.

Something I was thinking a lot about in November, now a NYTimes article: How Tech’s Biggest Companies Are Offloading the Risks of the A.I. Boom. If there is a financial bubble in AI, it rests on fancy ways of hiding exposure to risk, which Michael Lewis, author of The Big Short, compares here to the 2008 financial crash.

More specifically to the questions of AI safety:

Great writeup at 80,000 Hours on AI-enabled extreme concentration of power; it includes both advice on how you can help solve this problem and reasons why you should not work on solving it. A classic 80,000 Hours approach. (There is also a Forethought podcast/video on the topic here.)

Chinese safety evaluations of AI models, including lots of Chinese models that I haven’t heard of, plus the major American ones (and Mistral). It seems their evaluations don’t include anything relating to “frontier risks” or catastrophic risks, which is okay for a start… and of course nobody really knows how to measure that anyway. Yes, the people and ruling government of China do not want to be harmed by AI, despite speculation to the contrary.

Survey says: Americans are in favor of regulating AI, especially (?) Trump voters

The Guardian reports on extremist groups using AI to boost their propaganda, because of course they are!

RAND Report (with other groups) on Europe and the geopolitics of AGI. Key “findings” include: “The emergence of AGI could fundamentally reshape the global distribution of power” and “Europe currently lacks the strategic awareness, competitive positioning, and policy strategies to navigate a potential transformation to a world with AGI” (well, nobody else is ready for AGI either, but it’s good to get the thinking going).

Anthropic publishes the latest on how well their models are doing in terms of suicide prevention and sycophancy. Good work, as usual.

The above study by Anthropic used Petri, but Anthropic also released bloom, a new tool for automated evaluation of model behaviors. I have no way of evaluating it or comparing it to other open-source tools for LLM safety evaluation like Plexiglass or Open-prompt-injection, but hopefully sometime next year I’ll get a chance to explore them.

In the world of AI x Biology and/or health tech:

Paper published on Creating Enforceable Biosecurity Standards for Nucleic Acid Providers.

Nvidia: How to Train Scientific Agents with Reinforcement Learning

GovAI published what I believe is the most comprehensive article to date on AI capabilities and the risk of bioterrorism. Hopefully in another few weeks I will finally publish my own summary of this field as a follow-up to Part 1, here.

Preprint from the lab of Nobel laureate Jennifer Doudna demonstrates the success of an AI model for designing RNA-guided nucleases (such as those used in CRISPR systems) for more efficient gene editing. (I think; I haven’t read the whole paper, but I assume it will be published in a journal in another few months.)

There has been enough talk about AI and biosecurity (in particular, intentional harm from malicious use of synthetic biology) that we now have a meta-analysis of papers discussing the problem. The finding: “existing governance remains fragmented.”

An opinion piece published in Nature argues that the first AI-designed “drugs” will not be small molecules but antibodies (though the author doesn’t frame it quite that way).

And about biological risks to life on our planet:

Really excellent white paper from the Centre for Long-Term Resilience showing that it would be more cost-efficient for the UK to mandate screening of DNA synthesis than to rely on companies volunteering to do so.