Five Things: Dec 6, 2025

CDC and vaccines, AI persuasiveness, blogs by OpenAI and NIST, concerns around DeepSeek

Five things that happened/were publicized in the past week in the worlds of biosecurity and technology:

CDC meeting on vaccines reverses older recommendations

Huge paper on AI persuasiveness just published

OpenAI starts a blog on AI safety research

NIST’s CAISI starts a blog on AI safety research

DeepSeek, without the safety testing

1) CDC panel removes recommendation for Hep B vaccine

This week, the United States’ Center for Disease Control held their Advisory Committee on Immunization Practices, or ACIP, which convened to discuss the national recommended vaccine schedules. These recommendations really matter, not just because the CDC, in theory, should be seeking to “Control Disease,” but also because private medical insurance in the U.S. must cover the costs of vaccines that have ACIP approval.

The meetings this week were quite something. At least there were no physical altercations. By the end of it all ACIP made their (Trump approved) recommendations, which included (among other things), reversing the 35-year old position recommending the Hepatitis B vaccine for newborns, which acted as a safety net in case of parent-to-child transmission. They are not saying not to vaccinate, just that it should be a choice, an “Individual-Based Decision” (which it always was; obviously any parent who objected to the vaccine was always allowed to opt out). The actual implications of this decision for health insurance is still unclear.

If you are curious about some of the evidence and rhetoric around vaccine safety, you can check out the resources put together by The Evidence Collective, which put together a research publication-based response to some of the statements made in those meetings.

The public health bloggers are in mourning, catastrophizing, or worse.

2) Change My Mind, AI Edition

A paper in Science (one of the world’s top scientific journals) was just published on the persuasiveness of LLMs. The study was enormous, using 76,977 participants (crowdsourced from the UK), 19 open- and closed-source LLMs, 707 issue topics and 466,769 specific claims made by the LLMs across their conversations that were fact-checked by a combination of human and AI methods.

They found a number of interesting things, well beyond the headline of “LLMs were able, on average, to shift people’s political opinions.” Higher-parameter models (the ones that had cost more, computationally, to train) were more persuasive, but post-training “applied to a small open-source model (Llama-8B) transformed it into an as- or more-effective persuader than frontier model GPT-4o.” Another interesting result: “AI models were most persuasive when they packed their dialogue with information,” as in, the more facts that they claimed to try to win over their human conversation partner, the more persuasive they were. That’s good news for people who believe in facts! The bad news is, “models with the highest information density [i.e. highest number of claims that could be fact-checked] also tended to be less accurate on average.”

3) OpenAI tries some more transparency

OpenAI says that they are super 100% into being safe and aligned, and so they are starting a new blog:

This blog is meant for ideas that are too early, too narrow, or too fast-moving for a full paper. Here, we aim to share work that otherwise wouldn’t have been published, including ideas we are still exploring ourselves. If something looks promising, we’d rather put it out early and get feedback, because open dialog is a critical step in pressure testing, refining, and improving scientific work. We’ll publish sketches, discussions, and notes here, as well as more technical pieces less suited for the main blog… You can expect posts from people across the company who are thinking about how to make AI systems safe and aligned. For a future with safe and broadly beneficial AGI, the entire field needs to make progress together.

The first major post combines everyone in AI safety’s two favorite things: sparse autoencoders (SAE) and emergent misalignment. Looks promising! (And is a bit more evidence that emergent misalignment is a function of character personas)

But the very first line of the introductory blog post states:

At OpenAI, we research how we can safely develop and deploy increasingly capable AI, and in particular AI capable of recursive self-improvement (RSI).

So they are indeed working towards recursive self-improvement! This line got a lot of hate, apparently.

4) NIST follows the blogging fad

The National Institute for Standards and Technology, or NIST, is almost certainly the most underrated US government agency (after all, what could be more boring than ‘standards’?) but they have really been doing amazing work for decades, especially in cybersecurity, and most recently, in AI safety. In 2023, the Biden administration created the AISI, which was renamed CAISI (Center for AI Standards and Innovation) by the Trump administration.

Well, starting this week, CAISI now also has a research blog! Two posts up now are about creating better standards for evaluating AI capabilities and risk profiles (hooray standards!) and LLM cheating on their evaluation tests (boo, cheating!)

5) DeepSeek doesn’t care about safety?

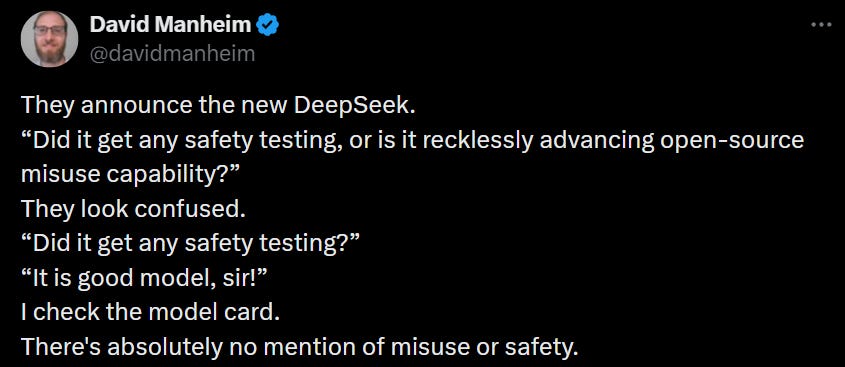

DeepSeek v3.2 was released two weeks ago and is supposedly very good at math, but I haven’t used it so I can’t say how it compares to the latest American closed models. But when it comes to how safe the model is, we have this X post by David Manheim:

Transformer is reporting the same, with more explanation about how this is Very Bad, but doesn’t have any more information other than the fact that safety testing was missing from its model card.

I have no idea if this is true, but just speculating, maybe they did do safety testing, but having very different views/cultural attitudes about what should be shared with the public, they do not plan to publicly discuss this information? Isn’t China hoping to lead on AI safety? I certainly don’t know the answer.

In Other News…

On AI doing things in the world:

In case Gemini 3 Pro wasn’t good enough for you, perhaps consider Gemini 3 Deep Think (released Dec 4)?

Amazon releases a new generation of its LLM, Nova. Also Amazon: 1000 workers sign a petition raising “serious concerns” about the its “aggressive rollout” of these AI tools that they fear will replace workers.

Joe Rogan interviews Jensen Huang (CEO of Nvidia). Rogan asks some of the good questions, like, “what if AI becomes smarter than humans but doesn’t care about us” and “if AI gets so super smart won’t it take all the jobs”? Huang’s answer is basically, “nah, that won’t happen.”

More specifically to the questions of AI safety:

OpenAI announces a new research paper about how to reduce LLM hallucinations/lying by making it confess to doing so. The response to this was mixed. I know some folks who are working on a much better idea (which seems obvious in retrospect) that I hope will be published in a few weeks.

Governor Ron DeSantis (Florida) announced a proposal “to protect consumers by establishing an Artificial Intelligence Bill of Rights for citizens.”

Two prominent bloggers (here and here) talk AI safety and “the race with China” to Artificial Superintelligence (or General Intelligence).

I am somewhat optimistic about third-party evaluations of AI models, that can be used as part of a licensing system or something that can allow for regulatory markets to emerge as a way for managing AI-associated risks. Another step in that direction,

Anton Leicht has a good critique of Anthropic, that they should stop trying to be more than one thing. I am not sure if their ‘transparency advocacy’, for example, would be more effective if spun off from the company, but worth considering.

A few reports of a South Korean safety institute saying that they found a “jailbreak” for Gemini 3 Pro and got it to tell them how to make explosives and biological weapons. I don’t think this is really news, considering that jailbreaks are not that hard to find, even just a day or two from the release of a new model.

In the world of AI x Biology and/or health tech:

Excellent article at Lawfare detailing the question of biosecurity from a US export controls perspective, arguing that we often need to share information publicly or at least with allies—but this can run into prohibitions that are designed to stop potentially hazardous bioweapons from leaving the US. (Unrelated, but just because I mentioned Lawfare: they also just published a review of Yudkowsky/Soares’ book)

Harvard (and other Boston plus Taiwan) researchers found some really promising results in getting LLMs to help with systematic literature reviews. These kinds of reviews are a critical part of how basic research gets summarized so that healthcare providers (but not the CDC, apparently) can find out what is the “scientific consensus.” They are also very labor intensive, and the authors of the paper found that AIs can do a great job… but with a lot of extra rigging.

That was last week. This week, a team from Milan publicized a pre-print showing that the LLMs make frequent mistakes (of course, the models that they used were no longer state of the art by last week). Seems like there really is room here for a specialized medical-research AI.

In theory, you could think of biology as something like: genomes → DNA → RNA → single protein → metabolic function → coordination → entire cell. There are powerful AI tools for most of the steps here, but surprisingly few for RNA. This week we have gRNAde (yes!) “an RNA language model” that “provides an experimentally validated, open-source platform for automated design of complex RNA structures, paving the way for fully programmable RNA catalysts and nanostructures.” This is really exciting, not only because RNA modeling is very hard and because RNA is important, but also because RNA is basically the only biological molecule that can catalyze its own synthesis, that can make copies of itself without the help of other specialized molecules.

And about biological risks to life on our planet:

Nice op-ed at Bulletin of the Atomic Scientists on improving genetic engineering forensics: for any biological agent, if it was engineered artificially, how can we tell by whom? Every lab leaves a trace, and AI will likely be helpful in detecting it.

Robert Redfield, CDC director appointed by first-term Trump who supervised its disastrous COVID-19 response, was interviewed on Fox News to tell everyone that the reason for these failures was because the CDC refused to believe that the virus came from a lab leak and that the CDC wasn’t Trump/MAHA enough. He is also really worried about biosecurity and the possibility of bioterrorism.

Sometimes-overly-sensational writer Annie Jacobson has written a book about biological warfare. It is not due to come out until the summer, but when it does, maybe she’ll be on the Joe Rogan podcast and scare the ***** out of some key members of the Trump administration.

Israel does not really have a biosafety/biosecurity framework, according to a new State Comptroller Report, and so there are no real laws or regulations to ensure that pathogens do not end up in the wrong hands. Good thing there nobody in the Middle East is interested in committing acts of violence.