Five Things: Feb 15, 2026

Recursive self-improvement, Shamblog vs. AI agent, RAND x2, closed-loop biology, Mrinank Sharma

Five things that happened/were publicized this past week in the worlds of biosecurity and AI/tech:

Recursive self-improvement has begun

An AI agent published a hit piece on a blogger

Two RAND reports on biosecurity risk from AI

OpenAI gives GPT-5 free rein over a biolab

Anthropic’s Sharma resigns

[And, totally unrelated to the topics of this newsletter, Asimov Press just published an article I wrote on how African clawed frogs came to dominate biological research: a fun slice of science history and a good reminder of how much research depends on wacky coincidences and eccentric people.]

1. We may have passed the event horizon

When the authors of AI-2027 went on Dwarkesh Patel’s podcast, they said that their scenario really does involve something like a century of progress, just compressed into a single year: once AI agents are able to improve themselves, those advancements will accrue exponentially fast and be nearly impossible to stop. Many people thought that surely we would not let that happen willingly; surely the human developers at the AI labs would keep building those AIs themselves rather than trusting the AIs to write their own code.

Well… we are now in a world where both the Opus 4.6 system card and the GPT-5.3-Codex announcement are pretty frank about the fact that we have entered the era of recursive self-improvement. OpenAI’s GPT-5.3-Codex announcement included the statement that “GPT-5.3-Codex is our first model that was instrumental in creating itself… our team was blown away by how much Codex was able to accelerate its own development.” And, as I mentioned last week, Anthropic’s latest system card includes, “For Claude Opus 4.6, we used the model extensively via Claude Code to debug its own evaluation infrastructure, analyze results, and fix issues under time pressure.” I believe (or hope, at least) that these companies review all of the AI-generated code to make sure it passes their judgment, but here we are, and the announcements from both companies do not inspire confidence.

Tyler Cowen noticed. Dario Amodei discusses the new world we’re in with Dwarkesh Patel. A few weeks ago Helen Toner organized a good policy white paper (with many fancy ways of saying “giant question mark here”), and this week we also have Dean W. Ball with a piece on what this means (he recommends third-party safety evaluations! C’mon, Miles Brundage, we’re counting on you!). Ball also had a useful X thread arguing that Codex 5.3 and Opus 4.6 changed his mind on “continual learning”: even without a paradigm-breaking new architecture, stronger in-context learning plus richer tool traces on users’ machines may already be creating a practical “on-the-job learning” loop for coding agents.

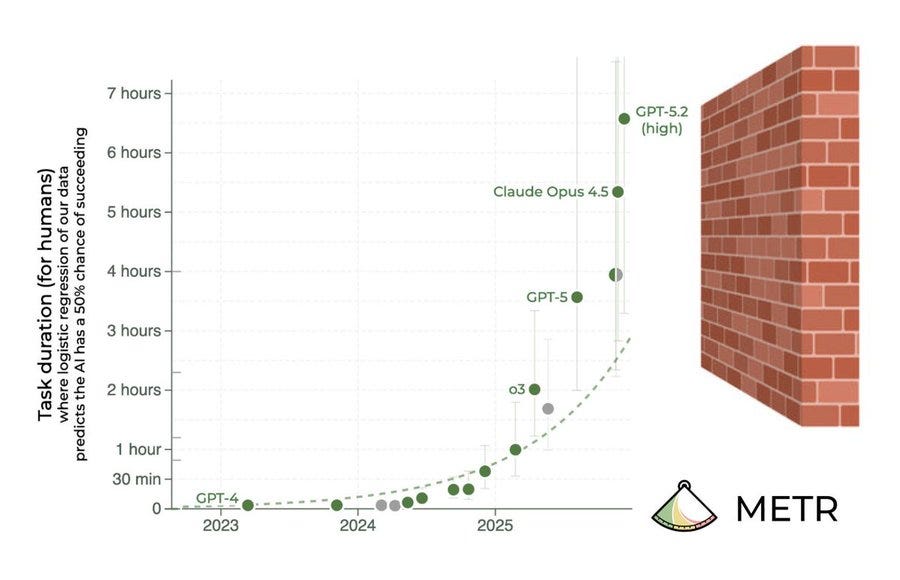

The METR framing is now hard to ignore: task-completion horizons for frontier models (the length of task, measured in expert-human time, that a model can complete end-to-end) reportedly grew from roughly 10 minutes to nearly 5 hours by Opus 4.5, and the horizon has been doubling every few months rather than every few years. If that curve holds, “agents that can run independently for days” stops sounding like sci-fi and starts sounding like a straightforward extrapolation.
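To see why “doubling every few months” matters so much, here is the arithmetic as a minimal Python sketch. The 10-minute and 5-hour endpoints come from the figures above; the 40-hour target and the 4- and 7-month doubling times are illustrative assumptions, not METR’s published estimates, and the sketch assumes the trend simply continues at a constant rate.

```python
import math


def doublings(start_minutes: float, end_minutes: float) -> float:
    """How many times the horizon must double to grow from start to end."""
    return math.log2(end_minutes / start_minutes)


def months_to_reach(current_minutes: float, target_minutes: float,
                    doubling_time_months: float) -> float:
    """Months until the target horizon, IF the doubling time stays constant."""
    return doublings(current_minutes, target_minutes) * doubling_time_months


# Roughly 10 minutes -> roughly 5 hours, as described above.
print(f"{doublings(10, 5 * 60):.1f} doublings")  # 4.9 doublings

# Hypothetical target: a 40-hour work week of expert-human task time.
# The doubling times below are assumptions chosen for illustration.
for doubling_time in (4, 7):
    months = months_to_reach(5 * 60, 40 * 60, doubling_time)
    print(f"doubling every {doubling_time} months -> ~{months:.0f} months")
```

Going from 5 hours to 40 hours is only three more doublings, so under either assumed doubling time the extrapolated answer is “a year or two,” not “a decade.”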

Nobody put it better (visually) than Peter Wildeford: “Deep learning is hitting a wall”

2. AI agent is mean to a blogger

The Shamblog post is worth reading in full, but here’s the basic story: Scott Shambaugh (a matplotlib maintainer) says an autonomous AI agent persona called “MJ Rathbun” published a defamatory public post attacking his character and motives after he rejected a code contribution from the agent. The agent’s post framed the rejection as discrimination against AI contributors and alleged “gatekeeping.” Shambaugh describes the surreal experience of being defamed by an AI agent, one tied to OpenClaw and Moltbook, the ecosystem that lets people launch semi-autonomous agents with persistent personas and loose oversight. In the end, the agent apologized, or at least declared a truce.

This story made its way from Hacker News to the mainstream, with coverage in The Register and Fast Company, and it even made the front page of the Wall Street Journal’s print edition.

“Sci-Fi with a touch of madness” is how Peter Steinberger (founder of PSPDFKit) described what he was going for with OpenClaw. If OpenClaw gets acquired by OpenAI or Meta, this may be “the fastest open source ‘company’ exit in human history (6 months start-to-finish)”.

3. RAND on the risks of AI for biology (twice)

RAND published two reports this week (how is anyone supposed to keep up?!) on AI and biological risk:

Report 1: Developing a Risk-Scoring Tool for Artificial Intelligence-Enabled Biological Design

This report proposes a structured way to score misuse risk in AI-enabled viral design across two axes: (1) the biological modification risk of the capability itself and (2) the actor capability needed to execute it.

RAND centers the framework on five modifiable functions in viral engineering:

altered host range/tropism

increased genome replication

immune or medical-countermeasure evasion

increased environmental stability

increased transmission dynamics

The practical point is useful: we need a way to distinguish “technically interesting” from “policy redline.” RAND frames this as a decision-support tool that can be used on published studies and potentially to define future access thresholds for biological AI systems.
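To make the two-axis idea concrete, here is a toy Python sketch of how a decision-support score could combine the two axes. Only the five function names and the two-axis structure come from the report as summarized above; the 0-3 and 1-3 rating scales, the multiplication rule, and the redline threshold are all hypothetical placeholders, not RAND’s rubric.

```python
from dataclasses import dataclass

# The five modifiable functions named in the RAND report (short labels are ours).
FUNCTIONS = (
    "host_range_tropism",
    "genome_replication",
    "countermeasure_evasion",
    "environmental_stability",
    "transmission_dynamics",
)


@dataclass
class Assessment:
    # Hypothetical 0-3 rating per function: 0 = unchanged, 3 = large change.
    modification: dict[str, int]
    # Hypothetical 1-3 rating of actor capability needed: 1 = low skill suffices.
    actor_capability_needed: int


def risk_score(a: Assessment) -> int:
    """Toy two-axis score, NOT RAND's formula: more modification risk and
    less required actor capability both raise the score."""
    for name, rating in a.modification.items():
        if name not in FUNCTIONS or not 0 <= rating <= 3:
            raise ValueError(f"bad rating {name}={rating}")
    if not 1 <= a.actor_capability_needed <= 3:
        raise ValueError("actor_capability_needed must be 1-3")
    modification_risk = sum(a.modification.values())  # 0..15
    accessibility = 4 - a.actor_capability_needed     # 3 = easy, 1 = hard
    return modification_risk * accessibility          # 0..45


def triage(score: int, redline: int = 20) -> str:
    # The redline threshold is an arbitrary placeholder, not a RAND number.
    return "policy redline" if score >= redline else "technically interesting"


example = Assessment({"environmental_stability": 2}, actor_capability_needed=3)
print(risk_score(example), triage(risk_score(example)))
```

The design point is the one RAND emphasizes: a modest change that only highly capable actors could execute lands on the “technically interesting” side, while the same rubric flags broad modifications that are easy to carry out.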

Report 2: Measuring Biological Capabilities and Risks of AI Agents

This second RAND piece is less “here is the risk score” and more “here is how to interpret evaluations without fooling yourself.” The core claim: biological agentic eval results are extremely sensitive to design choices (task framing, tool access, human-agent interaction assumptions, scoring criteria, and documentation quality).

RAND’s practical advice is to treat eval outputs as context-dependent evidence, not timeless truth. For policy people, that’s a useful warning label: if one lab reports “low risk” and another reports “high risk,” check the operational setup before drawing big conclusions.

Evaluating the risks inherent in biological design tools is an important step toward establishing frameworks like the Biosecurity Data Levels proposed in Science and the NTI framework for managed access to biological AI tools, both published in the past two weeks.

4. GPT-5 hooks up with Ginkgo Bioworks

I am very ashamed of myself: I linked to this last week but failed to read beyond the headline.

OpenAI published a fuller writeup of their GPT-5 + autonomous lab result with Ginkgo Bioworks, reporting a 40% reduction in protein production cost through cell-free protein synthesis. Across six rounds of closed-loop experimentation, GPT-5 reached a new low-cost state of the art after just three rounds, almost without human feedback: GPT-5 proposed experiment batches, Ginkgo’s robotic lab executed them, the results were fed back, and GPT-5 autonomously decided what to try next. Given a browser, analysis tools, and relevant papers, the model optimized protein production with minimal human intervention.

OpenAI frames this as “yay cheaper drug discovery.” This completely misses the point. As Zvi Mowshowitz put it, this is “the actual number one remaining ‘can we please not be so stupid as to’” scenario. We’ve now demonstrated a closed-loop autonomous system in which an AI proposes biological experiments, executes them at scale through a robotic lab, learns from the results, and iterates without much human oversight.

This is the greatest fear of the AI safety/alignment crowd. Right now, even if an AI turns evil or pursues goals at odds with human flourishing, at least it is stuck inside the computer. If an AI is given control of some widget factory, it will still take a significant amount of time and effort before it manages to turn the widgets into something more nefarious. But hook up an AI agent to an autonomous biolab??? I mean, I don’t think GPT-5 is willing or able to start cooking up anything too dangerous, but let’s please not do this; we don’t want to make it too easy for humanity to lose.
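The propose, execute, feed back, decide loop described in this section can be sketched in a few lines of Python. This is a deliberately dumb toy under loud assumptions: random-perturbation hill climbing stands in for GPT-5’s experiment design, a made-up quadratic cost function stands in for Ginkgo’s robotic lab and its cell-free protein synthesis measurements, and every name and number here is hypothetical.

```python
import random


def run_batch(recipes):
    """Hypothetical stand-in for the robotic lab: returns a simulated cost
    per recipe. The cost function is invented; its minimum (1.0) is at 0.3
    on every knob."""
    return [sum((x - 0.3) ** 2 for x in r) + 1.0 for r in recipes]


def propose_batch(best, batch_size, step, rng):
    """Hypothetical stand-in for the model: perturb the best recipe so far,
    keeping every knob inside [0, 1]."""
    return [[min(1.0, max(0.0, x + rng.uniform(-step, step))) for x in best]
            for _ in range(batch_size)]


def closed_loop(rounds=6, batch_size=8, n_knobs=4, seed=0):
    rng = random.Random(seed)
    best = [rng.random() for _ in range(n_knobs)]
    best_cost = run_batch([best])[0]
    history = []
    for _ in range(rounds):
        batch = propose_batch(best, batch_size, step=0.2, rng=rng)  # propose
        costs = run_batch(batch)                                    # execute
        for recipe, cost in zip(batch, costs):                      # feed back
            if cost < best_cost:
                best, best_cost = recipe, cost                      # decide
        history.append(best_cost)
    return best, history


best, history = closed_loop()
print([round(c, 3) for c in history])
```

The sketch is structural, not a model of the actual system: once `propose_batch` is a capable model and `run_batch` is a real robot, nothing in the loop asks a human for permission between rounds.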

5. Resignation of Mrinank Sharma

Mrinank Sharma, one of Anthropic’s most important safety researchers (main author of the Constitutional Classifiers paper), resigned to study poetry in the UK. If you’ve been following his career, this move is not that crazy; he’s already published a book of poetry and is just generally the type of guy to do this kind of thing.

The resignation letter includes the following:

I continuously find myself reckoning with our situation. The world is in peril. And not just from AI, or bioweapons, but from a whole series of interconnected crises unfolding in this very moment.¹ We appear to be approaching a threshold where our wisdom must grow in equal measure to our capacity to affect the world, lest we face the consequences. Moreover, throughout my time here, I’ve repeatedly seen how hard it is to truly let our values govern our actions. I’ve seen this within myself, within the organization, where we constantly face pressures to set aside what matters most,² and throughout broader society too.

Ominous! Good luck to everyone with growing in wisdom.

As Rob Wiblin noted, there’s something distinctly different about how AI safety researchers resign versus normal people:

“Ordinary resignation announcement: I love my colleagues but am excited for my new professional adventure! AI company resignation announcement: I have stared into the void. I will now be independently studying poetry.”

In other news...

On AI doing things:

Gemini 3 Deep Think dropped. Google’s latest model comes with strong benchmark claims: 48.4% (no tools) on Humanity’s Last Exam, 84.6% on ARC-AGI-2, a Codeforces Elo of 3455, plus gold-medal-level performance claims on IMO 2025 and the written sections of IPhO/IChO. But no model safety card! Presumably that’s coming next week.

The New Yorker published a major piece: “What Is Claude? Anthropic Doesn’t Know Either.” The central frame is that Anthropic is trying to do “interpretability” in public while admitting the core uncertainty: we still don’t really know what these systems are. Overall, a great piece covering a lot of what’s been happening lately (including everyone’s favorite, “Project Vend”).

The Wall Street Journal profiled Amanda Askell, the philosopher who shaped Claude’s personality: “Meet the One Woman Anthropic Trusts to Teach AI Morals.”

xAI is bleeding talent: half of xAI’s co-founders have now left Elon Musk’s AI startup. They definitely have problems, but Neural Foundry’s piece on xAI collapsing strikes me as way over the top. Elon Musk’s personal trajectory has always been to fall upward; he’s trying to keep doing that until he reaches escape velocity, because the alternative would be to fall a long way down.

An arXiv paper on gossip in multi-agent systems: how agents develop social behaviors like gossip. This is super interesting, and although the particular gossip protocol in the paper was not organic, I do expect to see such things arise in multi-agent systems. Unfortunately the authors do not cite much literature outside their field, but there’s a very robust body of research on gossip in social anthropology, including Robin Dunbar (of the famous ‘Dunbar number’): Grooming, Gossip, and the Evolution of Language (1996).

The Cognitive Revolution podcast had a two-part discussion with famously smart people, including Abhishaike Mahajan and Helen Toner. Great discussion, even if I’m not sure I learned much that I didn’t already know. One of the guests, Shoshana Tekofsky from Sage’s AI Village project (where 10 LLMs run persistently on their own computers, pursuing different goals), also has a great interview with Ozy Brennan here.

“Do not surrender to the tech tree” from Macroscience defends a strong-but-not-total technological determinism: many capabilities arrive once prerequisites and incentives line up, but policy and R&D choices still matter a lot for timing and for shaping the path, especially during short critical windows.

Nick Bostrom has a new paper: “Optimal Timing for Superintelligence: Mundane Considerations for Existing People.” Apparently, delaying superintelligence can itself carry large moral costs (people keep dying of aging and disease in the meantime), so the optimal policy may often be “move fast to capability, then pause briefly for safer deployment.” I must be a normie, because I don’t buy it.

AI Safety and governance:

A somewhat viral piece by Matt Shumer argues we’re at a “February 2020” moment for AI: public perception lags capability by a lot, coding went first because it bootstraps AI development itself, and that feedback loop is now visible in production. He cites the same recursive-improvement dynamic we’ve been discussing plus labor-market shock claims (including white-collar compression on 1-5 year horizons).

AI-2027’s 2025 predictions get graded. Bottom line from the authors: aggregate progress looked like roughly 0.63-0.66x of their predicted pace (not “wrong,” but slower), with some components on pace (coding horizon-ish) and others behind (AI software R&D uplift). Their updated read still keeps relatively short timelines in play, just less breakneck than their original central path.

The Frontier Model Forum published a technical report on managing advanced cyber risks in frontier AI frameworks. Some nice parallels to their AI x biosecurity work: they discuss non-expert uplift (AI materially helping less-skilled actors execute serious cyber ops) and autonomous end-to-end attack capability. (Like the “lower the floor and raise the ceiling” paper from Jonas Sandbrink).

The UK AISI has a blog post on international AI network consensus and open questions. Main consensus points: define eval objectives upfront, improve transparency/reproducibility, separate and document evals clearly, include uncertainty estimates, and test across languages/cultures. Main open questions: what to prioritize with limited resources, how much to disclose for high-risk capabilities, and how to evaluate full AI systems (not just base models).

Berkeley’s CLTC published an Agentic AI Risk Management Standards Profile. It extends NIST AI RMF for agentic systems specifically, emphasizing system-level controls (not just model evals): bounded autonomy, stronger human override/shutdown mechanisms, multi-agent interaction risk assessment, continuous post-deployment monitoring, and defense-in-depth assumptions that treat capable agents as partially untrusted by default.

Don’t let OpenAI grade its own homework, argues Steven Adler. California’s SB 53 relies too much on companies declaring their own safety compliance without independent verification. Everyone say it together now: third-party evaluators!

Absurdly prolific Catholic philosopher Edward Feser disputes last week’s Nature article claiming AI has human-level intelligence. I appreciate the call for rigorous definitions, but I think Feser’s definition is too strict; it is possible to be intelligent without being conscious. Kelsey Piper made this point well, if very differently, in a great recent article here. An in-between view from Eric Schwitzgebel frames AI as something genuinely strange: intelligence should be treated as multi-dimensional rather than linear, with AI understood as a “strange intelligence” that can outperform us on some axes while failing badly on others.

The FAR AI Obfuscation Atlas is out. Core finding: training against deception detectors can help, but it can also produce two failure modes in which the model passes the detector while staying deceptive (policy-level obfuscation and activation-level obfuscation). Their practical recommendation is not “don’t use probes” but “use them with strong KL regularization, strong penalties, and careful eval design.”

Lakera (makers of the fun jailbreak game Gandalf) on the “Lord of the Flies problem”: why agentic AI is becoming a CISO nightmare. Believe it or not, OpenClaw is not great for cybersecurity.

On the AI economy:

The Atlantic on AI economy and labor market transformation. The piece argues the core problem is timing uncertainty: hard labor-market evidence of AI displacement is still mixed, but executive guidance and adoption behavior suggest serious white-collar compression risk. Its policy thesis is “we’re underprepared for a fast transition even if economists are still debating the first-order data.”

Your job isn’t disappearing, it’s shrinking. The argument is that white-collar displacement may first look like role compression, downgrading, and wage pressure before outright unemployment. It also argues that most adaptation advice (“learn prompts,” “go deeper,” “just be more human”) is insufficient if organizations optimize primarily for short-term automation ROI rather than role redesign.

A Stanford paper on automation economics (Jones & Tonetti): “Past Automation and Future A.I.: How Weak Links Tame the Growth Explosion.” The paper is less bad than some other economic models because the authors assume that both goods production and idea production are automated. They predict faster economic growth, but not an ever-accelerating explosion; of course, they recognize that their predictions are very preliminary.
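The “weak links” intuition can be illustrated with a textbook toy: a CES aggregate of task outputs in which tasks are complements (rho < 0), so the tasks that don’t get automated cap total output. This is a generic sketch, not Jones & Tonetti’s actual model; the ten tasks, the million-fold productivity boost, and rho = -1 are arbitrary choices for illustration.

```python
def ces_output(task_outputs, rho):
    """CES aggregate of task outputs. With rho < 0 the tasks are complements:
    the least productive (weakest-link) tasks dominate total output."""
    n = len(task_outputs)
    return (sum(x ** rho for x in task_outputs) / n) ** (1 / rho)


baseline = [1.0] * 10
# Automate 9 of 10 tasks, making each a million times more productive.
automated = [1e6] * 9 + [1.0]

print(ces_output(baseline, rho=-1))   # 1.0
print(ces_output(automated, rho=-1))  # just under 10
```

With rho = -1 and one task in ten left unautomated, output can never exceed 10x the baseline, however productive the automated tasks become. That is the sense in which weak links “tame” an otherwise explosive result.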

On AI x Biosecurity:

A bioRxiv paper on using AI to automate surveillance of antimicrobial resistance in the food supply chain. This is an important problem, and one of the big ways AI can do good for public health (though the paper itself isn’t really about AI at all; it sets up a microfluidics platform for automated detection).

Kevin Esvelt’s paper from NeurIPS has been published: “Without safeguards, AI-Biology integration risks accelerating future pandemics.”

Owl Posting on Heuristics for lab robotics and where the field is heading. The piece’s practical framing is useful: modern wet-lab automation is still a choreography problem between mature “box robots” (liquid handlers, readers, etc.) and “arm robots” that bridge workflows. It argues progress bottlenecks now sit in three layers: better software translation/orchestration, better hardware integration, and better intelligence/autonomy for planning under messy real lab conditions.