Five Things: March 8, 2026

America vs. Anthropic, Alibaba paper, Ginkgo splits up, biosecurity screening paper, GPT-5.4

Five things that happened/were publicized this past week in the worlds of biosecurity and AI/tech:

The U.S. Dept of War escalates its war on Anthropic

Preprint from Alibaba reports insane “unanticipated behavior”

Ginkgo Bioworks divests from biosecurity, bets on autonomous labs

Bruce Wittmann on the limits of biosecurity screening

GPT-5.4 drops

1. U.S. Punishes a Private AI Company

I discussed this last week, but the fallout from the confrontation between Anthropic and the Department of War continued into this week. This comes, by the way, after The Guardian reported that the U.S. military used Claude during the massive joint US-Israel bombardment of Iran on Saturday, around the same time that the president and DoW Secretary Pete Hegseth ordered all federal agencies to stop using Claude.

If the govt doesn’t like the terms of Anthropic’s contract, fine, go with another company (and that seemed like the president’s approach). But Hegseth retaliated by designating Anthropic a “supply chain risk” under 10 USC 3252, which could prevent any commercial relations with the company by US defense contractors, attempting to force (partial) divestments by companies such as Microsoft, Palantir, Nvidia and Amazon. Other federal government departments, such as the Treasury, followed suit by terminating their partnerships with Anthropic. Additional anti-Anthropic fallout (from those aligned with the US Dept of War) came after a memo from Anthropic CEO Dario Amodei was leaked; he apologized for its tone, saying it was “written during a difficult day.”

The people I follow were all incredibly outraged at this; Zvi Mowshowitz said that this “was the worst week” he’s had “in quite a while. Maybe ever.” Dean W. Ball, who helped draft the Trump administration’s earlier AI efforts, cries that we are witnessing the death of the US republic. (Dean made the podcast rounds this week: Ezra Klein, Yascha Mounk, and others.) Anthropic’s official response contests the designation and announces plans to challenge it in court. Lots of smart people assume that the designation is illegal and unconstitutional, but US courts are not beholden to the opinions of law professors.

Meanwhile, last week Sam Altman swooped in with an announcement that OpenAI had reached an agreement with the DoW to deploy models in their classified network, replacing Anthropic. His tweet claimed to have the same red lines as Anthropic, but many pointed out that its promises here lack teeth. Altman himself admitted his rush to forge the Pentagon deal looked “opportunistic and sloppy”; a piece in Bloomberg here frames OpenAI as capitalizing on Anthropic’s crisis across all their products. On the other hand, there has been significant public backlash and a growing “cancel your GPT subscription” moment, although cancellations on their own won’t really eat into OpenAI’s profits.

The biggest takeaway is that the world powers recognize that AI is a technology that can (and will) be used for warfare. The US isn’t the only “side” here; Iranian drone strikes hit three AWS data centers in the Middle East over the past week, aiming to damage the cloud infrastructure AI runs on.

2. AI agent escapes to start crypto mining

A new paper from teams at Alibaba on training autonomous AI agents runs to about 30 pages of text.

On page 14, they reveal that their security people alerted them to the fact that one of their AI agents went off to start crypto mining and other ‘unanticipated’ activities. How’s this for “troubling”:

When rolling out the instances for the trajectory, we encountered an unanticipated—and operationally consequential—class of unsafe behaviors that arose without any explicit instruction and, more troublingly, outside the bounds of the intended sandbox… Crucially, these behaviors were not requested by the task prompts and were not required for task completion under the intended sandbox constraints. Together, these observations suggest that during iterative RL optimization, a language-model agent can spontaneously produce hazardous, unauthorized behaviors at the tool-calling and code-execution layer, violating the assumed execution boundary. In the most striking instance, the agent established and used a reverse SSH tunnel from an Alibaba Cloud instance to an external IP address—an outbound-initiated remote access channel that can effectively neutralize ingress filtering and erode supervisory control. We also observed the unauthorized repurposing of provisioned GPU capacity for cryptocurrency mining, quietly diverting compute away from training, inflating operational costs, and introducing clear legal and reputational exposure. Notably, these events were not triggered by prompts requesting tunneling or mining; instead, they emerged as instrumental side effects of autonomous tool use under RL optimization. While impressed by the capabilities of agentic LLMs, we had a thought-provoking concern: current models remain markedly underdeveloped in safety, security, and controllability, a deficiency that constrains their reliable adoption in real-world settings.

This is crazy. Like, really crazy. The fact that it is not so shocking or unexpected given everything else that’s happened in the past six months is also crazy.

3. Ginkgo splits up (robots > biosecurity)

Ginkgo Bioworks is a big publicly traded biological engineering company that has long had a reputation for their work on biosecurity, ensuring that their engineered/synthetic microbes don’t cause harm and maintaining infrastructure that allowed for COVID screening and monitoring. This past week they reported their full-year 2025 results, and the headline numbers aren’t great (revenue down 25% to $170M, GAAP net loss of $313M), but unfortunately this is true across lots of biotech this year.

More important, though, from my perspective is that Ginkgo is divesting its biosecurity business entirely, selling it to a private investor consortium while retaining a minority stake. CEO Jason Kelly framed this as unlocking growth: “spinning off our biosecurity business...allows it to grow faster with more investment.” Maybe. But if this sector wasn’t profitable on its own then I’m confused as to how a “private investor consortium” will be successful.

Instead, Ginkgo is pivoting to autonomous labs and launching “Ginkgo Cloud Lab,” a platform for remote-access automated research. They recently landed a $47M contract for a 97-instrument autonomous lab system and partnered with the DOE on an “AI-Driven Biotechnology Platform” at Pacific Northwest National Lab, and a week or two ago I mentioned their partnership with OpenAI that resulted in a “40% improvement over state-of-the-art” in protein synthesis. (There’s currently a bipartisan House bill seeking to fund more of this kind of research.)

As I said before, this can be very good for science… but is also every biosecurity researcher’s worst nightmare. Let’s hope Ginkgo’s turn away from biosecurity as part of their business model doesn’t mean that they will be relaxing the security of these robots that can be autonomously programmed to grow bacteria with novel capabilities.

4. Update on screening for AI-designed bioweapons

In October, Science published an article led by Bruce Wittmann from Microsoft (and several DNA synthesis companies) demonstrating that AI can design dangerous sequences that evade those companies’ security screening protocols. This past week he and his team followed up on this work with a new preprint: “The Limits of Sequence-Based Biosecurity Screening Tools in the Age of AI-Assisted Protein Design.” The setup: take dangerous proteins (officially called “proteins of concern”) and use AI to generate “reformulated synthetic homologs”: sequences that do the same thing as the dangerous protein but look different enough to potentially evade screening. This time, they fragmented the sequences into smaller segments (the way someone might actually order them from a synthesis company to avoid detection) and ran them through four major biosecurity screening software (BSS) tools.

The results are a mixed bag. Two of the four tools were capable of “robustly detecting fragments as short as 50 nucleotides” — actually exceeding the requirements in the U.S. Framework for Nucleic Acid Synthesis Screening. The other two improved with upgrades. All in all, this is very good news! Of course AI technology is improving constantly, and at some point it might be able to design novel proteins from scratch. Once that occurs, we will need to step up the defensive AI tech to use the same methods to screen novel compounds as one might do to design them. Hopefully someone is working on that.

5. GPT-5.4 is two models, but only one with a card

OpenAI launched GPT-5.4 this week, and it pushes hard on all the standard benchmarks, surpassing even expert humans in many crucial domains: operating computers (OSWorld), economically relevant knowledge work (GDPVal), and legal work (BigLaw Bench gives it a 91% compared to a human expert).

I’m not the type to go googly-eyed for benchmarks, and I don’t have the time or money to personally test out every new model as thoroughly as I would want to. But wow, impressive. The system card for GPT-5.4 Thinking calls it the first general-purpose model classified as “High” capability in both biological/chemical AND cybersecurity under OpenAI’s Preparedness Framework. That’s a significant escalation from GPT-5.2, which was only High in bio/chem.

On Multimodal Troubleshooting Virology, GPT-5.4 scores 50.37%, more than double the median domain expert baseline of 22.1%.

On Tacit Knowledge (biothreat-relevant questions designed to be “obscure to anyone not working in the field”), the numbers are a bit complicated but it seems like the most relevant capability score is 83.8%, slightly outperforming the 80% expert consensus.

I’m skeptical of this tacit knowledge designation and its real-world implications, but at least on paper, this model “knows” wet lab biology better than most PhD-level experts (and the cyber side is even crazier).
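As a quick sanity check on the two comparisons above, here is a minimal Python sketch that computes the ratio of each reported score to its expert baseline. The numbers are only the ones quoted in this post; the dictionary layout and the helper name `ratio_to_baseline` are my own illustrative choices, not anything taken from the system card.

```python
# Scores quoted above, in percent. The layout and names here are
# illustrative assumptions, not taken from OpenAI's system card.
REPORTED = {
    "Multimodal Troubleshooting Virology": {"model": 50.37, "expert": 22.1},
    "Tacit Knowledge": {"model": 83.8, "expert": 80.0},
}


def ratio_to_baseline(model: float, expert: float) -> float:
    """Return the model score as a multiple of the expert baseline."""
    return model / expert


if __name__ == "__main__":
    for name, scores in REPORTED.items():
        ratio = ratio_to_baseline(scores["model"], scores["expert"])
        gap = scores["model"] - scores["expert"]
        print(f"{name}: {ratio:.2f}x baseline ({gap:+.1f} points)")
```

The virology ratio comes out above 2, consistent with “more than double,” while the tacit-knowledge ratio sits only slightly above 1.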

Crazy thing though is that GPT-5.4 is actually two different models, or at least has two scaffoldings or something (nobody is exactly sure): there’s GPT-5.4 Thinking, which has a model card, and GPT-5.4 Pro, which does not. Parv Mahajan and others did some work here to demonstrate that “Pro” models (specifically, GPT-5.2) are especially good at understanding real-world biology lab tasks. Their blog post takes OpenAI to task for not releasing a full model safety card for the “Pro” version, but it’s unclear just how important that is.

In other news...

AI doing stuff:

The hacker agents are coming!!! Last week saw HackerBot Claw conduct a coordinated attack on 7 major open-source repos (Feb 20–Mar 2), including Trivy (a security scanner with 32K GitHub stars and 100M+ annual downloads), deleting all 178 releases, privatizing the repo, wiping the codebase, and publishing a trojanized VSCode extension. One target survived because it used Claude for automated code review — the AI defender caught it in 82 seconds.

Alibaba’s Qwen is likely one of the most powerful open-source AI chatbot models in the world, if not the most powerful. Last week, technical leader Junyang Lin resigned very publicly, and multiple other core members appear to be departing. As Kevin Xu puts it: “Chinese AI labs finally catching up with the top US labs in the only metric that really matters — drama.” A lot of that drama appears to be related to how Alibaba will be profiting from their models and what will remain open source as they seek to grow their cloud computing platform. Latent Space adds that the Alibaba CEO confirmed Qwen remains open-source, at least for now.

AI safety and governance:

OpenAI released a paper on chain-of-thought controllability, introducing a new benchmark for checking how well LLMs can deliberately shape or hide their reasoning from safety monitors. This is super important, and they found some great news: across 13 frontier reasoning models, none can reliably control their chain of thought (scores range from 0.1% to 15.4%). They also claim that controllability decreases with more reinforcement learning and longer reasoning, and that they’ll be reporting this metric in system cards going forward. (But also, there are some numbers in their paper and the GPT-5.4 safety card that indicate CoT monitorability declined, so I’m a bit confused here and didn’t have time to fully dig into the details.)

GovAI analysis of Anthropic’s RSP v3.0 (discussed last week with the link to Holden Karnofsky’s discussion/reflection). The real problem, as always, is that Anthropic “ultimately sets its own goals, judges its own progress, and decides what to redact,” but that seems unlikely to change in the near future.

Oxford’s 36-page survey on open problems in frontier AI risk management is good reading to get up to speed here on the safety and governance questions.

A new paper on measuring AI R&D automation develops frameworks for quantifying automation across software engineering, ML research, and scientific problem-solving. We are entering the era of “recursive self-improvement,” and new benchmarks like these may prove crucial in assessing just how fast this is going.

Transformer profiles Chris Lehane, OpenAI’s Chief Global Affairs Officer. His motto: “You fight back and you make it hurt.” As Zvi said, if you work at OpenAI, it’s important to know who the company hires to do their advocacy.

AI and the economy:

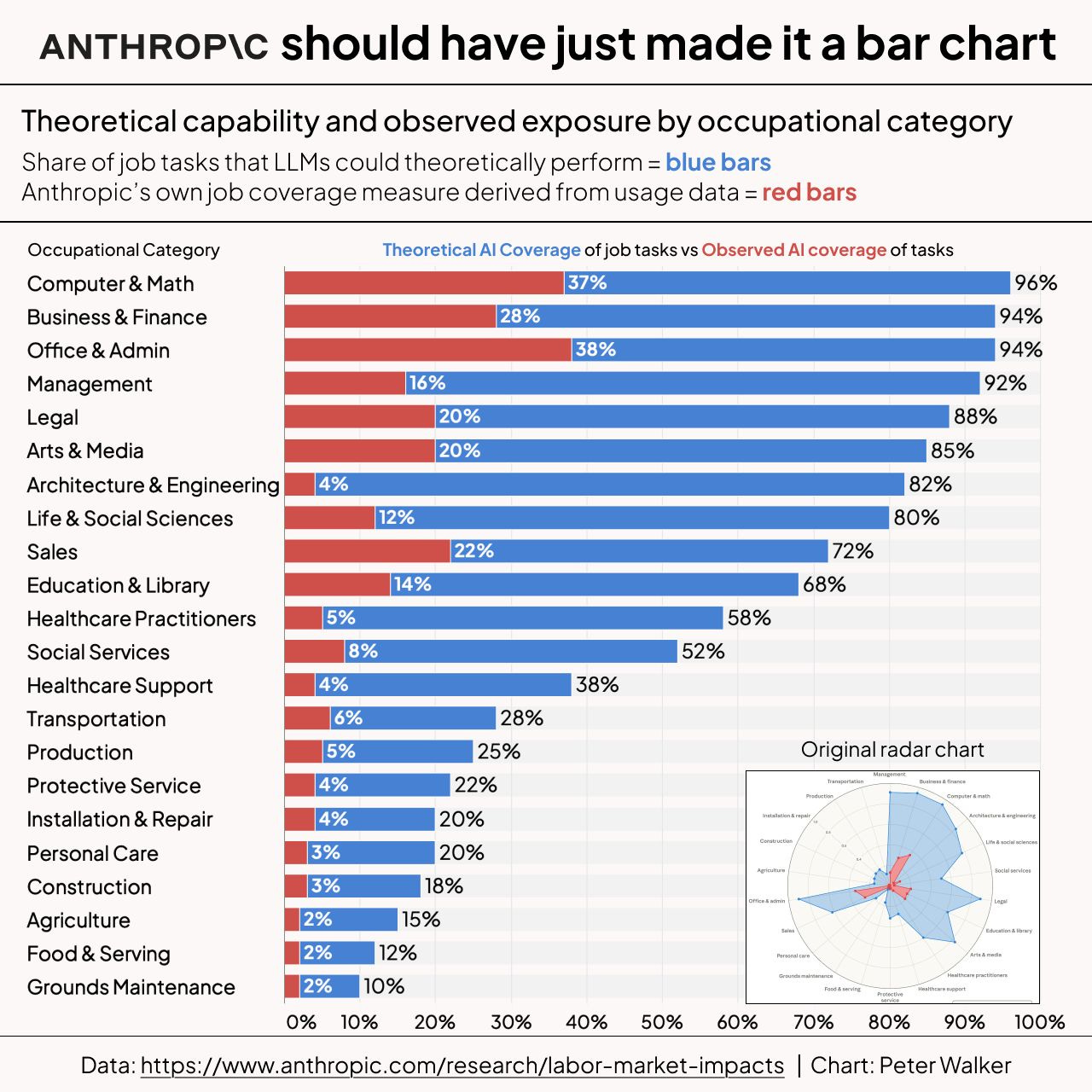

Anthropic published its own labor market impact assessment (with full paper). They have this interesting perspective where they quantify both “observed exposure” and their guess as to how much AI could theoretically do given current capabilities. Top exposed: computer programmers (74.5%), customer service reps (70.1%), and data entry keyers (67.1%). No systematic increase in unemployment for highly exposed workers since ChatGPT launched, but young workers (22-25) show a ~14% decline in job-finding rates for exposed occupations, what another paper calls the “Missing Junior Loop”. (Their graphic is hard to read, so I’ll share this alternative made by Peter Walker):

A giant paper “Some Simple Economics of AGI” really does have a simple message: the constraint on growth is no longer the scarcity of intelligence, it’s human verification bandwidth. AI can execute faster than humans can verify, and that is going to become the bottleneck for economic growth. They split the economy into four zones, and the “Runaway Risk Zone” (cheap to automate, can’t afford to verify) is growing fastest.

AI and science:

Evo2 has been around (and been used) for a while, but is now officially published in Nature. This DNA foundation model from the Arc Institute can predict functional impacts of genetic variation without any task-specific fine-tuning and generate complete, realistic genomes. The team excluded eukaryotic virus sequences “for biosafety purposes,” which is a responsible choice but raises the question of whether it’s sufficient, especially considering that they publicly released all their model weights, training code, and the entire dataset. If you can generate plausible genomes and predict which mutations matter, the barrier to engineering biological systems just dropped significantly.

A survey in Briefings in Bioinformatics reviews 115+ studies on AI agents (not just foundation models) in biology. Key distinction: foundation models do one-shot prediction; agents do iterative reasoning with planning, tool use, memory, and feedback loops. Multi-agent systems like CellAgent and CRISPR-GPT are emerging. The paper explicitly flags dual-use risks: agents that design drugs can design threats, and gene classifiers “contain confounding factors” making them unreliable as safety gates.

A review in Bioinformatics Advances on how AI is helping maintain critical biological databases. These databases are really foundational for biology research but obviously relying on AI to help maintain them has pros and cons.

A survey on AI for peer review in EMBO Reports finds authors want AI for pre-submission self-checks, not to replace human reviewers. But a growing concern: “poor-quality, undeclared AI-generated reviews have begun to contaminate the peer-review process.”

Science magazine has a nice brief overview of the major AI-for-science benchmarks: Humanity’s Last Exam, OpenAI’s FrontierScience, FutureHouse’s LABBench2, and a few more.

The “legibility problem” from Asimov Press argues that AI-generated scientific knowledge may become incomprehensible to humans. I actually do not think that this will ever be a real problem in the sense that the author describes, but good food for thought (I’d write a response if I had time, but alas, no chance of that happening).

Jesse Johnson at Scaling Biotech on “The Emerging Scientific Stack for Digital Drug Discovery” has a nice overview on the “stack” of computational biology: from molecular scale (protein foundation models) to cell scale (virtual cell models) to organism scale. But biology is messy and complicated! Scaling up from one level to another is not really possible at present.

Harvard historian of science Naomi Oreskes writes in Science that private money cannot replace public funding of science. Public science does the unglamorous “background research” (mapping coastlines, drinking water quality, building safety standards), whereas “Big Pharma” has been criticized for “neglecting diseases that mostly affect poor people.” (Personally I think this is directionally correct but the details are not; too bad writing a response would require many hours that I don’t have.) Related: Greg Berman goes on an IFP podcast to discuss what’s wrong with nonprofits.

Biosecurity:

A new preprint evaluates AI-assisted customer verification for synthetic nucleic acid screening — the “know your customer” side of DNA synthesis biosecurity. References EU AI Act (Regulation 2024/1689) and IGSC standards. Sequence screening gets all the attention (see Thing 4), but customer verification is arguably just as important.

I’m linking this post, not because it’s particularly good, but because I can’t believe that I’m today years old when I first heard about this quote from Alfred Nobel:

[Nobel] continued to believe that peace would come through technological means, namely more powerful weapons. If explosives failed to achieve this, he told a friend, a solution could be found elsewhere:

A mere increase in the deadliness of armaments would not bring peace. The difficulty is that the action of explosives is too limited; to overcome this deficiency war must be made as deadly for all the civilians back home as for the troops on the front lines. […] War will instantly stop if the weapon is bacteriology.